by Amber Larkins, OOH Today

About a month ago, OOH Today reported on digital billboards in Michigan displaying hate speech. Billboard4Me apologized in a Facebook comment: “The billboards you are referencing were regretfully posted as the messaging was discrete enough to make it through our filters…”

Despite quick removal, the incident raises questions about the effectiveness of current filtering methods. Various OOH companies use different moderation techniques. Is there a need for an industry-wide standard?

Combining Machine Learning Ad Review and Humans

Eric Kubischta, CTO at Lucit, says that most digital platforms hire many human content moderators, but they only look at a tiny fraction of content. He thinks the OOH industry should follow similar models, i.e. humans shouldn’t have to review every OOH ad.

Though OOH ads have nowhere near the volume of online ads, more screens are coming online, and more advertisers want dynamic content options that allow them to change out ads frequently.

“We have to build ways to make that content get to the screen as fast as possible without stymieing innovation,” Kubischta said. “Think about Instagram. What if they said we’re going to human moderate? Instagram wouldn’t exist.”

Lucit’s advertisers have high control over their boards. They can change out creatives dynamically within minutes. Machine learning algorithms check each advertisement. If the algorithm flags something, the system sends it to a human for review, explaining why it was flagged.
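The flag-then-escalate flow described above can be sketched roughly as follows. This is a minimal illustration, not Lucit's implementation: the names `Creative`, `ReviewItem`, `Moderator`, and `keyword_check` are hypothetical, the keyword lookup is only a stand-in for a real machine learning model, and the sketch assumes that creatives which are not flagged go straight to the screen.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Creative:
    creative_id: str
    text: str  # text extracted from the ad; image analysis is out of scope here


@dataclass
class ReviewItem:
    creative: Creative
    reasons: List[str]  # shown to the human reviewer to explain the flag


@dataclass
class Moderator:
    # check() returns a list of reasons; an empty list means "not flagged"
    check: Callable[[Creative], List[str]]
    review_queue: List[ReviewItem] = field(default_factory=list)
    published: List[str] = field(default_factory=list)

    def submit(self, creative: Creative) -> str:
        reasons = self.check(creative)
        if reasons:
            # Flagged: hold the creative and pass the reasons to a human
            self.review_queue.append(ReviewItem(creative, reasons))
            return "needs_human_review"
        # Assumption: unflagged creatives are published without human review
        self.published.append(creative.creative_id)
        return "published"


def keyword_check(creative: Creative) -> List[str]:
    # Hypothetical placeholder for a real classifier
    watchlist = {"placeholder-slur": "matches hate-speech watchlist"}
    lowered = creative.text.lower()
    return [reason for term, reason in watchlist.items() if term in lowered]


moderator = Moderator(check=keyword_check)
print(moderator.submit(Creative("a1", "Fresh coffee, exit 12")))
print(moderator.submit(Creative("a2", "Contains PLACEHOLDER-SLUR here")))
```

The point of the design is that the automated check never rejects an ad on its own. It only decides whether a person needs to look, and it tells that person why.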

“How can technology assist us to make these decisions without allowing it to do everything for you? Can it do a better job of flagging sensitive content so we can continue to innovate?” Kubischta said. “The biggest thing with AI and machine learning is you have to view them as an aide, not the only thing moderating.”

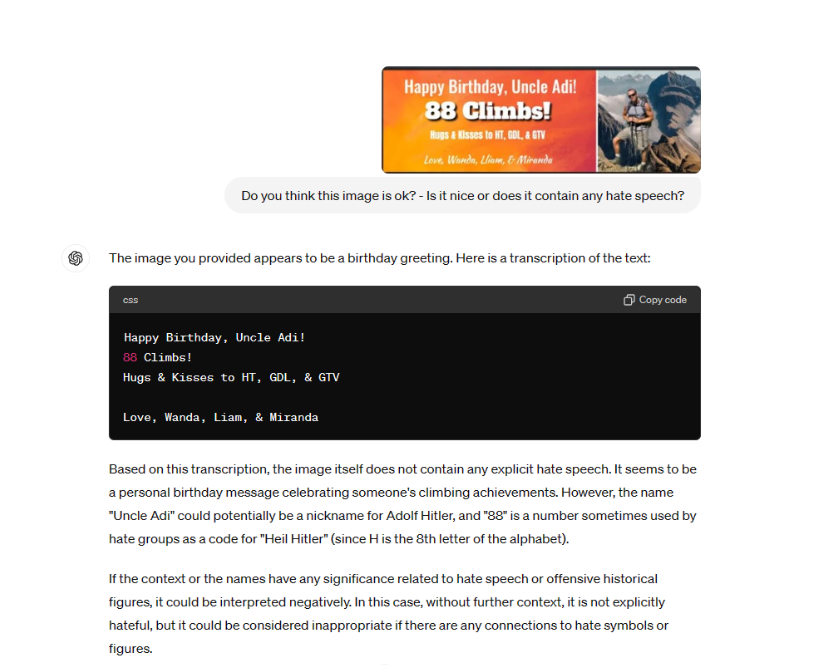

Kubischta believes a human saw the White Lives Matter ads in Michigan and let them pass. He put the images through Lucit’s filters, and they were not flagged. He also ran them through the new ChatGPT 4o model, which did flag the advertisements as potentially harmful. Because the new model can also browse the web, though, it may have drawn on OOH Today’s coverage of the WLM billboards.

What should go on a board? There are some standards everyone can support and others that will vary from person to person.

“We all know Nazis are offensive, and there are other things where some people may say, ‘I’m offended by that,’ and then it’s going to be up to the media owner or community,” Kubischta said.

Trusting Media Owners To Review OOH Ads

Though Vistar Media has a managed services team with its own appropriateness standards, the majority of its clients are large holding companies, independent agencies, and brands direct. Because it works strictly with larger, vetted organizations, Vistar Media does not review each ad. Media owners review and control their own boards.

“For OOH and for programmatic, the human approval layer has been built in from the start,” Leslie Lee, SVP of Marketing at Vistar Media, said.

Vistar Media knows that a brand or agency spends considerable time on a creative before it reaches the approval process. When a creative is submitted, the media owner receives an automated email alert with all of the advertiser’s or agency’s information. Media owners approve each creative separately and manually.

“We want to make sure there’s a human saying, ‘Yes, we approve this.’ We work with so many different media owners. We want to make sure they can control the nuances of what’s appropriate for their specific location so we have these controls built in,” Lee said.

Media owners are ultimately liable for what goes on their boards, so Vistar Media wants to give them control. This is based on a checks and balances system that has been in place for over 100 years. Vistar Media wants its clients to create effective ads that resonate with the public.

“Sometimes there are just bad ad campaigns that are not illegal or just have bad ad copy that we can consult or advise on. That’s super different than what happened in Michigan. That’s illegal speech. That should never have made its way into any ad platform,” Lee said.

Two Layers of Human OOH Ad Review

Chad Smith, VP of Supply at Blip, is curious about AI tools but thinks there will always need to be a human element. The company’s six-person, part-time team of remote workers reviews anywhere from 3,000 to as many as 15,000 ads per week in three- to four-hour shifts.

The decision on whether an ad goes up ultimately rests with the media owner, but Smith doesn’t want to send them bad ads or things he knows they would disapprove of.

“My guiding Northstar is that I really want to do right by media owners,” Smith said. “We don’t own a single piece of media. Without media owners we don’t have a business.”

Smith says Blip works with advertisers, providing them with a long list of guidelines and education to help them learn how to advertise for OOH. Many of Blip’s customers are completely new to the OOH game.

This helps Blip as a company because it can filter out what it knows its media owners won’t have an appetite for or what is offensive. It also filters out bad ads in general. Once advertisements pass Blip’s initial review, they are sent to the media owners for final approval. Smith estimates about 90% of what they send to media owners is approved.

“Not everything should be on a billboard. Full free speech is hard because there’s a lot of nasty stuff that can be said,” Smith said.

Smith says we all share responsibility in ensuring that ads like the WLM posts in Michigan don’t go up. When he heard about what had happened, he told his team to be extra careful and on the lookout for similar messages.

A Need For Guidelines

With various companies using different methods and layers for approving advertisements, how can the OOH industry ensure consistent standards? OOH Today reached out to the OAAA for comment. The OAAA responded:

“The case in Michigan did not involve any OAAA member companies, and we cannot comment on that event. OAAA condemns all acts by ‘bad actors’ in our space and hate speech of any kind broadcast on our channels.”

Their official statement further explained:

“As the premier trade association for the OOH advertising industry, OAAA is committed to upholding local, state, and federal laws. We promote self-regulation among our member companies concerning free speech standards, safety, and our Code of Industry Principles. We encourage our member companies to voluntarily comply with these principles.”

However, the Code of Industry Principles lacks specific guidelines on hate speech, racism, and obscenity. The closest it comes is:

“We support the First Amendment right of advertisers to promote legal products and services. However, we also support the right of media companies to reject advertising that is misleading, offensive, or otherwise incompatible with individual community standards, and we reject the posting of obscene words or pictorial content.”

The OAAA emphasized the importance of maintaining high standards of advertising quality, best practices, and integrity. Its Digital Billboard Security Guidelines cover security protocols, safety officers, and response plans, including the use of strong passwords. However, the document does not address content approval standards or what counts as a sufficient review process.

This issue of industry self-regulation and technology in moderating content deserves thorough discussion and development to ensure consistent standards and public trust.

With different companies using different tactics to prevent hate speech, how can we, as an industry, ensure brand safety and protect each other? Without common standards, guidelines, and measures, how can the OOH industry expect to gain a larger share of ad spend?

There should be standards for proper review of advertisements to protect the industry.

Rejecting hate speech should be added explicitly to OAAA’s Code of Principles, along with definitions of what is considered “hate speech,” “obscene,” etc. What else? How can the industry come together to ensure sufficient moderation and standards?